This article is the first in a two-part series that introduces website caching with tools such as Varnish and shows how to get better results with a model based on ESI (Edge Side Includes). Let us start with the basics.

Caching – the basics

Anyone working with web applications is probably familiar with the basic client-server communication model. Each time a user visits a page on our website, their request for content goes to the server, which generates a response and sends it back. "Each time" is the key phrase here. Every single visitor sees, for example, exactly the same home page, which means the server does exactly the same job many, many times, and unnecessarily.

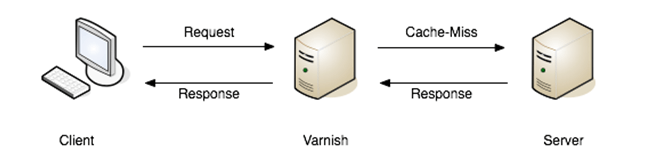

The solution is to add a caching layer to our architecture. Such a layer can be built with Varnish, a reverse proxy that acts as a middleman between the client and the web application. Like any other caching mechanism, its job is simple: keep as much content in cache memory as possible, because reading from that memory is typically much faster than asking the source for the content (in our case, the web application server).
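As a minimal sketch of what placing Varnish in front of an application involves, the VCL 4.0 file below only tells Varnish where the application server lives. The address `127.0.0.1` and port `8080` are assumptions made for illustration; with nothing else configured, Varnish falls back to its built-in caching logic.

```vcl
vcl 4.0;

# Hypothetical application server; adjust host and port to your own setup.
backend default {
    .host = "127.0.0.1";
    .port = "8080";
}
```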

What do we gain by introducing such a solution? The applications we create can execute many demanding operations: ones with high computational or memory complexity, or ones that put a noticeable load on database servers. For a single request it is hard to speak of much pressure on the machines we use; after all, modern computers can handle a lot. However, once the number of concurrent visitors grows, the load becomes a real problem. With a caching layer in place, most of the traffic never even reaches our application.

Varnish – how it works

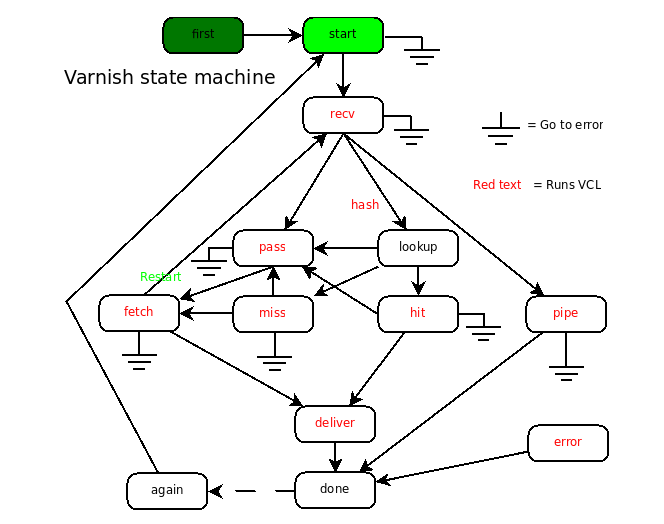

At the heart of Varnish lies a state machine written in C. Its model is somewhat more elaborate than that of standard caching mechanisms. The machine's flow is shown in the diagram above. What the diagram depicts can differ slightly between individual releases of Varnish; for example, two of the states were renamed in version 4.0.

Each state has its own responsibilities and by default executes the logic shipped with the Varnish engine (it is a C application, after all). The states can be summarized as follows:

- RECV – the entry point. The most important decision made here is which path the current request should take.

- PIPE – an "empty" run. Varnish does not take part in the communication and simply passes the client request and the server response along.

- PASS – like PIPE, the request goes straight to the application server, except that the server response is still processed by the actions executed for content served from cache memory.

- HASH – the first step on the most common path, the one that makes use of cache memory. The cache key is calculated here.

- LOOKUP – a query to cache memory using the key prepared in the previous step. Depending on the result, one of the following two actions is taken.

- HIT – runs when the content is found in cache memory.

- MISS – runs when the requested content is not present in cache memory or is outdated.

- FETCH – a query to the application server for the content requested by the client.

- DELIVERY – serving the requested content, whether it came from cache memory or from the web application.

- ERROR – error handling.
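The choice of path made in RECV and the key calculation in HASH can be sketched in VCL 4.0 roughly as follows. The backend address and the `/download/` and `/admin` URL prefixes are hypothetical, chosen only to show the three possible routes; the `vcl_hash` body mirrors what Varnish 4.0 does by default as far as I am aware.

```vcl
vcl 4.0;

# Assumed application server address.
backend default {
    .host = "127.0.0.1";
    .port = "8080";
}

sub vcl_recv {
    # RECV: decide which path the request takes.
    if (req.url ~ "^/download/") {
        # PIPE: hand the connection over and stay out of the way.
        return (pipe);
    }
    if (req.url ~ "^/admin") {
        # PASS: always ask the application server, never the cache.
        return (pass);
    }
    # No return here: Varnish continues with its built-in vcl_recv,
    # which sends cacheable requests on to HASH.
}

sub vcl_hash {
    # HASH: build the cache key from the URL and the host.
    hash_data(req.url);
    if (req.http.host) {
        hash_data(req.http.host);
    } else {
        hash_data(server.ip);
    }
    # LOOKUP: query the cache with the key built above.
    return (lookup);
}
```

Leaving the last branch of `vcl_recv` without a `return` is deliberate: it keeps the built-in safety checks (request method, cookies, authorization) in force for everything the custom rules do not cover.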

Each summary describes only what the corresponding action is responsible for and what happens under the default configuration. The authors of Varnish let us plug in custom logic through VCL configuration files.

VCL

Varnish Configuration Language is a simple language created solely for the purposes of Varnish. Its syntax does not diverge from that of today's popular programming languages, and its list of components is not extensive. As a result, the language is easy to pick up yet functional enough to do its job.

VCL is a domain-specific language. Elements of the communication, such as the client request, the server response and the cached object, are all represented by objects. By operating on those objects, Varnish can influence the communication between client and server.
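To make the object model more concrete, here is a small VCL 4.0 sketch that touches the backend response (`beresp`), the cached object (`obj`) and the client response (`resp`). The backend address, the `/static/` prefix, the one-hour lifetime and the `X-Cache` header name are all assumptions chosen for illustration.

```vcl
vcl 4.0;

# Assumed application server address.
backend default {
    .host = "127.0.0.1";
    .port = "8080";
}

sub vcl_backend_response {
    # beresp: the response just fetched from the application server.
    if (bereq.url ~ "^/static/") {
        # Keep these objects in cache for an (arbitrary) hour.
        set beresp.ttl = 1h;
    }
}

sub vcl_deliver {
    # resp: the response about to be sent to the client.
    # obj.hits: how many times the cached object has been served so far.
    if (obj.hits > 0) {
        set resp.http.X-Cache = "HIT";
    } else {
        set resp.http.X-Cache = "MISS";
    }
}
```

A header like this is a common debugging aid: inspecting the response headers in a browser or with a command-line HTTP client shows whether a given response came from the cache.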

VCL code is translated to C, compiled, and loaded into the Varnish process as a shared library. This way our own functions replace the default logic used in individual states.
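One nuance worth illustrating: as far as I know, a custom subroutine fully replaces the default logic only when it ends with a `return`. If it does not, Varnish runs the built-in code for that state afterwards. The sketch below, with an assumed backend address, normalizes the `Host` header and then deliberately falls through to the default behaviour.

```vcl
vcl 4.0;

# Assumed application server address.
backend default {
    .host = "127.0.0.1";
    .port = "8080";
}

sub vcl_recv {
    if (req.http.Host) {
        # Drop an explicit port so that "example.com:80" and
        # "example.com" end up with the same cache key.
        set req.http.Host = regsub(req.http.Host, ":[0-9]+$", "");
    }
    # No return statement: the built-in vcl_recv runs next.
}
```

To inspect the C code generated from a VCL file, `varnishd -C -f default.vcl` prints it and exits (the file name here is only an example).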

The default logic

By default Varnish caches responses to all requests except:

- those using a method other than GET or HEAD,

- those containing an Authorization header,

- those containing cookies.
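Expressed in VCL 4.0, the three rules above correspond roughly to the sketch below. It is a simplified paraphrase of the built-in `vcl_recv`, not a verbatim copy: the real built-in logic contains further checks (for example for unknown request methods), so consult the `builtin.vcl` file shipped with your Varnish version for the authoritative text. The backend address is an assumption.

```vcl
vcl 4.0;

# Assumed application server address.
backend default {
    .host = "127.0.0.1";
    .port = "8080";
}

sub vcl_recv {
    if (req.method != "GET" && req.method != "HEAD") {
        # Only GET and HEAD are looked up in the cache.
        return (pass);
    }
    if (req.http.Authorization || req.http.Cookie) {
        # Potentially personalized content: skip the cache.
        return (pass);
    }
    # Everything else continues to HASH and LOOKUP.
    return (hash);
}
```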

Despite appearances, that still covers a large share of traffic, and in most scenarios the default behaviour is enough, since the application server no longer has to serve static content such as images. However, the last point on the list above, cookies, is worth reconsidering. Requests containing cookies are not cached, which is fully justified: the presence of a session cookie indicates a visitor whose page may be personalized to some degree. In an online store, the mini-cart is such an element.
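Static files are a case where cookies carry no meaning, so a common approach is to strip them and let the default rules cache the result. The VCL 4.0 sketch below assumes a backend address and a list of file extensions that are purely illustrative; adapt both to the site in question.

```vcl
vcl 4.0;

# Assumed application server address.
backend default {
    .host = "127.0.0.1";
    .port = "8080";
}

sub vcl_recv {
    # Static assets are the same for every visitor, so the cookie
    # is irrelevant and only prevents caching.
    if (req.url ~ "\.(png|jpe?g|gif|ico|css|js)(\?.*)?$") {
        unset req.http.Cookie;
    }
    # No return: the built-in logic still applies afterwards.
}

sub vcl_backend_response {
    # A Set-Cookie header on the response would also make the
    # built-in logic treat the object as uncacheable.
    if (bereq.url ~ "\.(png|jpe?g|gif|ico|css|js)(\?.*)?$") {
        unset beresp.http.Set-Cookie;
    }
}
```

This helps with static assets only; personalized HTML pages still bypass the cache.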

Should a fragment as small as the mini-cart, small at least compared with the whole page being served, force us to give up the benefits of caching? The answer is no, and a mechanism exists that solves the problem: Edge Side Includes, to which the next article is dedicated.

Published December 24, 2014