In the previous post we looked at the tools that can be used to find performance bottlenecks in PHP applications, Magento in particular. Now it's time to deal with the bottlenecks we found.

Application layer

We started with the cache layer. We found that Redis is a better choice for caching than the popular Memcached: it works faster as a Magento cache backend. Redis is harder to scale out (only master-slave replication is available out of the box), but for us that is enough.

A good choice for you will probably be a Varnish proxy, which is what we use. It helps not only with static content (product images, CSS files; about 70% of HTTP requests can end up on Varnish without hitting the application server) but also with output caching of Magento-generated pages and blocks. If you use a Varnish-powered caching module, you can use the ESI mechanism in Varnish to refresh dynamic blocks while all other page content stays cached.

In the next step we added a second application server and used HAProxy as the load balancer. HAProxy is also replicated (so that it does not become a SPoF) using an IP-failover technique. Magento puts a heavy load on app servers because a lot of calculations are done in application logic, and the application loads hundreds of classes and PHP files (which causes heavy I/O load). Of course, before adding servers we made sure that APC code caching was enabled (on PHP 5.5 it is better to use the native OPcache, because APC is unstable in some cases and can cause segfaults).

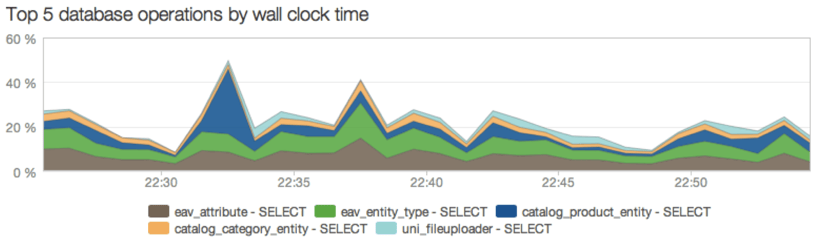

We added data-layer caching to Mage_Catalog_Model_Product::load. When caching a method this low-level, it is crucial to perform cache invalidation (using observers and cache tagging). As we wrote in the previous post, we cannot use flat tables. So each $product->load() causes a lot of SELECT queries on the database to load attributes (Magento uses EAV heavily). It is also worth considering adding a cache to Mage_Eav_Model_Entity_Abstract, which could bypass the EAV database operations.
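The load-then-invalidate idea can be sketched independently of Magento. The snippet below is a minimal illustration in Python, not our actual module: `TaggedCache`, `load_product`, `on_product_saved` and the key and tag formats are hypothetical names, and the in-memory dictionaries stand in for a real backend such as Redis.

```python
from collections import defaultdict


class TaggedCache:
    """In-memory stand-in for a tag-aware cache backend (hypothetical)."""

    def __init__(self):
        self._data = {}
        self._keys_by_tag = defaultdict(set)

    def get(self, key):
        return self._data.get(key)

    def set(self, key, value, tags=()):
        self._data[key] = value
        # Remember which keys carry each tag so they can be dropped together.
        for tag in tags:
            self._keys_by_tag[tag].add(key)

    def invalidate_tag(self, tag):
        # Remove every cached entry that was stored with this tag.
        for key in self._keys_by_tag.pop(tag, set()):
            self._data.pop(key, None)


def load_product(product_id, cache, load_from_db):
    """Cache-aside load: try the cache first, fall back to the database."""
    key = f"product:{product_id}"
    cached = cache.get(key)
    if cached is not None:
        return cached
    product = load_from_db(product_id)  # the expensive EAV load
    cache.set(key, product, tags=[f"product_{product_id}"])
    return product


def on_product_saved(product_id, cache):
    """What a save observer would do: drop everything tagged with the product."""
    cache.invalidate_tag(f"product_{product_id}")
```

The important part is the observer: without it, a saved product would keep being served from the cache in its old state.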

With 2400 attributes we were bound by the InnoDB limit on columns per table, in our case about 900. So we added 900 attributes to the flat table: the most popular ones, which are used for filtering, product listing and so on. We noticed a huge decrease in query count on the DB server.

Sessions should be stored in a cache (Redis/Memcached) with a fallback to the database (on write you can write to both the DB and the cache, or queue the writes). There are A LOT of queries about session state, so there is no sense in querying the DB if you can do a fast check in the cache. Sessions are essentially key-value data.
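As a rough sketch of that read path and write path, assuming a write-through strategy (queued writes are not shown): `SessionStore` is a hypothetical class, and the two dict-like objects stand in for the cache (Redis/Memcached) and for the session table in the database.

```python
class SessionStore:
    """Cache-first session storage with a database fallback (illustrative only)."""

    def __init__(self, cache, db):
        self.cache = cache  # dict-like stand-in for Redis/Memcached
        self.db = db        # dict-like stand-in for the session table

    def read(self, session_id):
        # Fast path: most reads should be answered by the cache.
        data = self.cache.get(session_id)
        if data is not None:
            return data
        # Fallback: the cache was flushed or the entry was evicted.
        data = self.db.get(session_id)
        if data is not None:
            self.cache[session_id] = data  # warm the cache for the next read
        return data

    def write(self, session_id, data):
        # Write-through: keep both stores in sync on every write.
        self.cache[session_id] = data
        self.db[session_id] = data
```

With this layout a cache restart costs one extra database read per active session instead of logging everybody out.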

Consider using the Fast-Async Reindexing module. We had problems with frequent reindexing. These operations lock the database (in InnoDB only one record at a time, of course, but with huge record sets this can be dangerous).

We checked the Magento indexing code, and its flow looks like this: a transaction starts (the row is now locked) -> calculations are performed (this can take even a few seconds, as we saw) -> the row is unlocked. This is not optimal (in terms of latency and locking). One way to deal with this problem safely is to use master-slave replication of the database. In this setup indexing is done on the master (which is locked), but reads can be served from the slaves (which are not locked). Replication works by copying the binary log, so on the slaves locks are held only for the INSERT/UPDATE/DELETE operations themselves, with no additional locking time for the computations.
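The routing side of such a setup can be sketched as follows. This is only an illustration of the idea, not Magento's connection handling: `ReadWriteRouter` and `connection_for` are hypothetical names, and the connections are represented by plain strings. Replicas can lag behind the master, so reads that must see a just-written value should still go to the master.

```python
import itertools


class ReadWriteRouter:
    """Send plain SELECTs to slaves (round-robin) and everything else to the master."""

    def __init__(self, master, slaves):
        self.master = master
        # Cycle through the slaves; with no slaves, every query goes to the master.
        self._slaves = itertools.cycle(slaves) if slaves else None

    def connection_for(self, sql):
        parts = sql.split(None, 1)
        verb = parts[0].upper() if parts else ""
        # Simplification: only the first keyword is inspected, so statements
        # such as SELECT ... FOR UPDATE would need extra handling.
        if verb == "SELECT" and self._slaves is not None:
            return next(self._slaves)
        return self.master


router = ReadWriteRouter("master", ["slave1", "slave2"])
print(router.connection_for("SELECT * FROM catalog_product_entity"))
print(router.connection_for("UPDATE catalog_product_entity SET sku = 'x'"))
```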

Caveats

If you run a multi-server environment, you are probably using some kind of distributed file system (DFS) to handle user uploads and code deployment between machines. This is OK; we used GlusterFS. But you have to be careful. On some DFSes the stat() and open() syscalls can be slower, much slower, than on a local FS. Gluster uses kernel IO buffers, so in this case the problem doesn't exist.
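A quick way to see whether this affects you is to time stat() calls on the shared mount and on a local directory and compare the two numbers. The helper below is a rough sketch: `average_stat_seconds` is a hypothetical name, and the result depends heavily on whether the metadata is already cached, so treat it as an indication rather than a benchmark.

```python
import os
import time


def average_stat_seconds(directory, max_files=1000):
    """Return the average time of one os.stat() call for files under `directory`."""
    paths = []
    for root, _dirs, files in os.walk(directory):
        for name in files:
            paths.append(os.path.join(root, name))
            if len(paths) >= max_files:
                break
        if len(paths) >= max_files:
            break
    if not paths:
        return 0.0

    start = time.perf_counter()
    for path in paths:
        try:
            os.stat(path)
        except OSError:
            pass  # e.g. a broken symlink; still counts as one syscall
    return (time.perf_counter() - start) / len(paths)
```

Running it once against the DFS mount point and once against a local copy of the same code tree gives a first impression of the per-file overhead.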

APC is not 100% stable when used with PHP 5.4. We discovered some segfaults in the logs. It's better to use Zend Optimizer+ or OPcache (in PHP 5.5).

It is better to try nginx + PHP-FPM than Apache. The main reasons? RAM usage, speed, stability. More on this here: http://info.magento.com/rs/magentocommerce/images/MagentoECG-PoweringMagentowithNgnixandPHP-FPM.pdf.

How to deal with EAV

If (like us) you have a problem with flat tables and cannot turn them on, consider using tools like Lucene/Solr or Sphinx Search to bypass EAV on the frontend. Removing EAV from Magento is hard; this mechanism is the heart of the Magento architecture. You can bypass it, for example, by overriding the search and filtering models on the frontend. This also lets you use full-text search. Ready-to-use modules for Solr and Sphinx are easy to find.

What’s next?

Stay tuned. In the next post in this series I will describe how to deal with the database layer of Magento. That post will be the hard one!

Published January 23, 2014